Short talk exploring how AI agents are being built, deployed, and scaled across industries.

talk-data.com

Topic: AI/ML (Artificial Intelligence/Machine Learning), 9014 tagged

Informed Consent Forms (ICFs) are critical documents in clinical trials. They are the first, and often the most crucial, touchpoint between a patient and a clinical trial. Yet developing them is laborious, high-stakes, and heavily regulated. Each form must be tailored to jurisdictional requirements and local ethics boards, reviewed by cross-functional teams, and written in plain language that patients can understand. Producing ICFs at scale across countries and disease areas demands substantial manual effort and creates major operational bottlenecks. We combined traditional AI with large language models to auto-draft ICFs at scale across clinical trial types, countries, and disease areas. Building, testing, iterating on, and deploying the system yielded both technical and non-technical lessons for applying generative AI to complex documents at scale and with meaningful impact.
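
The abstract does not say how the plain-language requirement was checked. As one illustration of a "traditional" (non-LLM) check that could sit alongside an LLM drafter, here is a minimal sketch that scores text with the standard Flesch Reading Ease formula. The syllable counter is a rough heuristic, and using this score as a gate for ICF drafts is an assumption, not the presenters' method.

```python
import re

def count_syllables(word: str) -> int:
    """Rough English syllable estimate: count vowel groups, drop a silent final 'e'."""
    word = word.lower()
    groups = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and groups > 1:
        groups -= 1
    return max(groups, 1)

def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Standard Flesch Reading Ease formula; higher scores mean easier text."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)

def score_text(text: str) -> float:
    """Split text into sentences and words, then apply the Flesch formula."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one word and one sentence")
    syllables = sum(count_syllables(w) for w in words)
    return flesch_reading_ease(len(words), len(sentences), syllables)

plain = "You can stop at any time."
dense = "Participation necessitates comprehensive acknowledgement of investigational procedures."
print(round(score_text(plain), 1), round(score_text(dense), 1))
```

A drafting pipeline could flag any section that scores below a chosen threshold for human rewriting; the threshold is a policy decision that this sketch does not prescribe.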

Large AI models have become powerful but increasingly impractical: training costs are escalating, memory requirements are bloated, and latency bottlenecks limit real-world deployments. This talk introduces CompactifAI, a quantum-inspired compression framework that uses tensor networks to surgically shrink large models while preserving their accuracy and capabilities.
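
CompactifAI's internals are not described here, but a parameter count shows why tensor networks can shrink a layer so dramatically. This sketch assumes a tensor-train (matrix product operator) factorization with a uniform bond dimension, which is one common tensor-network format. The layer size, mode split, and bond dimension are illustrative choices, and the count says nothing about how much accuracy a given bond dimension preserves.

```python
def dense_params(rows: int, cols: int) -> int:
    """Parameters in an ordinary dense weight matrix."""
    return rows * cols

def tt_matrix_params(row_modes: list, col_modes: list, bond_dim: int) -> int:
    """Parameters in a tensor-train (MPO) factorization of a matrix.

    The row dimension is factored as prod(row_modes) and the column dimension
    as prod(col_modes). Core k has shape (r_{k-1}, row_modes[k], col_modes[k], r_k),
    with r_0 = r_n = 1 and every inner bond set to bond_dim (a simplifying assumption).
    """
    if len(row_modes) != len(col_modes):
        raise ValueError("row_modes and col_modes must have the same length")
    n = len(row_modes)
    ranks = [1] + [bond_dim] * (n - 1) + [1]
    return sum(ranks[k] * row_modes[k] * col_modes[k] * ranks[k + 1] for k in range(n))

# Illustrative layer: 4096 x 4096, with 4096 = 8 * 8 * 8 * 8 on each side.
dense = dense_params(4096, 4096)
tt = tt_matrix_params([8, 8, 8, 8], [8, 8, 8, 8], bond_dim=16)
print(dense, tt, round(dense / tt, 1))
```

For this example, the dense layer has 16,777,216 parameters and the tensor train has 34,816, roughly 480 times fewer. Practical methods choose or truncate bond dimensions per layer to trade size against accuracy.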

Modern defense systems operate in environments that change by the second. To keep up, they need more than static maps and siloed data; they need true situational awareness. This talk explores how we are building a Responsible Human-Agent Ecosystem that combines high-resolution 3D geospatial data, real-time sensor fusion, and AI-driven agents to help mission-critical platforms understand the world the way humans do, but faster and at scale.

Most data science projects start with a simple notebook—a spark of curiosity, some exploration, and a handful of promising results. But what happens when that experiment needs to grow up and go into production?

This talk follows the story of a single machine learning exploration that matures into a full-fledged ETL pipeline. We’ll walk through the practical steps and real-world challenges that come up when moving from a Jupyter notebook to something robust enough for daily use.

We’ll cover how to:

- Set clear objectives and document the process from the beginning

- Break messy notebook logic into modular, reusable components

- Choose the right tools (Papermill, nbconvert, shell scripts) based on your workflow—not just the hype

- Track environments and dependencies to make sure your project runs tomorrow the way it did today

- Handle data integrity, schema changes, and even evolving labels as your datasets shift over time

And as a bonus: bring your results to life with interactive visualizations using tools like PyScript, Voila, and Panel + HoloViz.
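
One way to make the point about data integrity, schema changes, and evolving labels concrete is a small validation step that runs before every pipeline execution. This is a minimal standard-library sketch. The function name, the row-of-dicts input format, and the `label` column are hypothetical, and in practice a dedicated validation library might take its place.

```python
from typing import Any, Dict, List, Set

def check_batch(rows: List[Dict[str, Any]], schema: Dict[str, type],
                known_labels: Set[str], label_col: str = "label") -> List[str]:
    """Return human-readable problems; an empty list means the batch looks sound."""
    problems = []
    for i, row in enumerate(rows):
        missing = schema.keys() - row.keys()   # columns the pipeline expects but did not get
        extra = row.keys() - schema.keys()     # columns added upstream without notice
        if missing:
            problems.append(f"row {i}: missing columns {sorted(missing)}")
        if extra:
            problems.append(f"row {i}: unexpected columns {sorted(extra)}")
        for col, expected in schema.items():
            if col in row and not isinstance(row[col], expected):
                problems.append(f"row {i}: {col} is {type(row[col]).__name__}, expected {expected.__name__}")
        label = row.get(label_col)
        if label is not None and label not in known_labels:
            # A label the model has never seen signals that the label set is evolving.
            problems.append(f"row {i}: unseen label {label!r}")
    return problems
```

Failing the run (or routing the batch to review) whenever this list is non-empty keeps a notebook-turned-pipeline from silently training on drifted data.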

AI teams iterate at the speed of innovation, while organizations require platforms that are reliable, governed, and cost‑efficient. This session presents pragmatic patterns and reference architectures that align rapid development with production requirements—so data scientists and developers can move fast without breaking stability.

Designing an ML model is one thing; designing an ML system that actually solves a business problem is another.

This talk explores how ML system design bridges the gap between a model and a real solution. Through practical examples, we’ll look at how communication with stakeholders, understanding functional and non-functional requirements, and aligning optimization and evaluation with business needs determine whether an ML initiative succeeds or stalls.

We’ll highlight key decision points — from translating vague goals into measurable objectives to balancing model performance with constraints like latency, interpretability, and maintainability.
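
Once vague goals become measurable objectives, the trade-off between model performance and constraints like latency or interpretability can be written down explicitly. This hypothetical sketch picks the best-scoring candidate that satisfies hard constraints; the candidate names, metrics, and numbers are invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Candidate:
    name: str
    f1: float               # offline quality metric agreed with stakeholders
    p95_latency_ms: float   # measured serving latency
    interpretable: bool     # whether the model can be explained to reviewers

def select_model(candidates: List[Candidate], latency_budget_ms: float,
                 require_interpretable: bool = False) -> Optional[Candidate]:
    """Return the best-F1 candidate that meets every hard (non-functional) constraint."""
    feasible = [
        c for c in candidates
        if c.p95_latency_ms <= latency_budget_ms and (c.interpretable or not require_interpretable)
    ]
    return max(feasible, key=lambda c: c.f1, default=None)

candidates = [
    Candidate("transformer", f1=0.86, p95_latency_ms=120, interpretable=False),
    Candidate("gradient_boosting", f1=0.82, p95_latency_ms=15, interpretable=False),
    Candidate("logistic_regression", f1=0.78, p95_latency_ms=2, interpretable=True),
]
```

With a 50 ms budget, the transformer is ruled out despite its higher F1. This is the kind of decision that should be made explicitly with stakeholders rather than left implicit in model selection.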

Attendees will walk away with a sharper view of what makes an ML system truly fit for its environment — and why good design matters as much as good modeling.

This session delivers a blueprint for building, deploying, and managing agents in a secure, scalable, and cost-effective manner on Google Cloud, bridging the critical gap between development and operations.

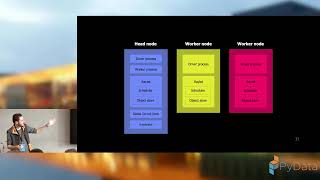

Python is the dominant language for AI and ML. While that makes it easy to write code for a single machine, running workloads across clusters of thousands of nodes is much harder. Ray lets you do exactly that. I'll demonstrate how to adopt this open-source tool with a few lines of code and, as a demo project, show how I built a retrieval-augmented generation (RAG) application for the Wheel of Time book series.
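
Ray's core pattern is to fan independent tasks out and then gather their results. This sketch shows that structure with the standard library's `ThreadPoolExecutor` on one machine, applied to a typical RAG preprocessing step (overlapping text chunks). It is a local stand-in, not Ray code. With Ray, the same structure would use `@ray.remote` on the task function, `.remote(...)` to submit it, and `ray.get(...)` to collect results across a cluster. The chunk size and overlap defaults are assumptions.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List

def chunk_text(text: str, size: int = 200, overlap: int = 50) -> List[str]:
    """Split text into overlapping character windows for retrieval."""
    if not 0 <= overlap < size:
        raise ValueError("need 0 <= overlap < size")
    if not text:
        return []
    step = size - overlap
    # Starting windows only while start < len(text) - overlap guarantees the
    # last window reaches the end of the text without a redundant tail window.
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]

def chunk_corpus(docs: Dict[str, str], workers: int = 4) -> Dict[str, List[str]]:
    """Fan out one chunking task per document, then gather the results.

    With Ray, chunk_text would be decorated with @ray.remote, submitted with
    chunk_text.remote(text), and collected with ray.get(...); here a local
    thread pool plays that role on a single machine.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {name: pool.submit(chunk_text, text) for name, text in docs.items()}
        return {name: fut.result() for name, fut in futures.items()}
```

Because of Python's GIL, threads here show the fan-out/fan-in shape rather than a CPU speedup. Spreading that work across processes and machines is what Ray is for.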

Companies today are hungry for external data to stay competitive, but actually getting and making sense of that data isn’t easy. Standard web scraping often produces messy or incomplete results, and modern anti-bot systems make reliable collection even tougher.

In this talk, I’ll share how pairing Python’s scraping frameworks (like Scrapy, Playwright, and Selenium) with AI/ML can turn raw, unstructured data into clear, actionable insights.

We’ll look at:

1) How to build scrapers that still work in 2025.

2) Ways to use AI to automatically clean, enrich, and classify data.

3) Real-world applications of sentiment analysis for reviews and social media.

4) Case studies showing how SMEs have used these pipelines to sharpen marketing and product strategies.

By the end, you’ll see how to design pipelines that don’t just gather data, but deliver real strategic value. The session will focus on practical Python tools, scalable deployment (Airflow, Kubernetes, cloud platforms), and key lessons learned from hands-on projects at the intersection of scraping and AI.
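
As a toy illustration of the "clean, then classify" stage, this sketch strips HTML residue from a scraped review and assigns a sentiment label. The word lists are hypothetical, and the lexicon scorer is a deliberately simple stand-in for the trained sentiment models the talk refers to.

```python
import html
import re

# Hypothetical word lists; a production pipeline would use a trained classifier.
POSITIVE = {"great", "love", "excellent", "fast", "reliable"}
NEGATIVE = {"bad", "slow", "broken", "hate", "terrible"}

def clean_review(raw: str) -> str:
    """Remove scraper residue and normalize text before classification."""
    text = re.sub(r"<[^>]+>", " ", raw)   # drop leftover HTML tags
    text = html.unescape(text)            # decode entities such as &amp;
    return re.sub(r"\s+", " ", text).strip().lower()

def sentiment(text: str) -> str:
    """Label text by counting positive vs. negative lexicon hits."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"
```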

Training one model is fun. Running thousands without everything catching fire? That’s the real challenge. In this talk, we’ll show how we — two data scientists turned accidental ML engineers — scaled anomaly detection at Vanderlande. Expect a peek into our orchestration setup, a quick code snippet, a look at our monitoring dashboard and how we scale to a thousand models.
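
The abstract does not show Vanderlande's code. This sketch illustrates one common shape for running many small models: one lightweight detector per asset, fitted and stored in a dictionary keyed by asset ID. The z-score detector and its threshold of 3 are assumptions chosen for simplicity, and in a real deployment the orchestrator would schedule each fit and persist the detectors, which this sketch leaves out.

```python
import statistics
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class ZScoreDetector:
    mean: float
    stdev: float

    def is_anomaly(self, value: float, threshold: float = 3.0) -> bool:
        if self.stdev == 0:
            return value != self.mean   # constant history: any change is unusual
        return abs(value - self.mean) / self.stdev > threshold

def fit_all(history: Dict[str, List[float]]) -> Dict[str, ZScoreDetector]:
    """Fit one detector per asset; assets with fewer than two readings are skipped."""
    return {
        asset: ZScoreDetector(statistics.fmean(values), statistics.pstdev(values))
        for asset, values in history.items()
        if len(values) >= 2
    }
```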

At IKEA, retail planning is a complex chain of processes, from sales forecasting to fulfillment and capacity assessment, that involves multiple teams. Each team builds its own predictive models independently, yet their outputs depend on one another, so the planning chain only holds together if those outputs stay consistent.

In this talk, we will show how IKEA uses Metaflow, an open-source framework for building and managing real-life ML, to orchestrate and connect the forecasting pipelines for more than thirty countries. We’ll discuss how Metaflow helps align independent teams, improve readability, and enable reproducible workflows and scale.

You will leave with practical approaches for an aligned team workflow and concrete patterns for orchestrating ML/AI pipelines.
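
Metaflow expresses pipelines as Python flows. Rather than guess at IKEA's flows, here is a library-free sketch of the underlying idea: stages owned by different teams declare which upstream outputs they consume, and a topological sort runs them in a valid order. The stage names are hypothetical, and the standard library's `graphlib` (Python 3.9+) stands in for Metaflow here.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, Set

# Hypothetical planning chain: each stage maps to the upstream stages it consumes.
PLANNING_CHAIN: Dict[str, Set[str]] = {
    "sales_forecast": set(),
    "fulfillment_plan": {"sales_forecast"},
    "capacity_assessment": {"sales_forecast", "fulfillment_plan"},
}

def run_chain(chain: Dict[str, Set[str]],
              run_stage: Callable[[str, Dict[str, Any]], Any]) -> Dict[str, Any]:
    """Run every stage after its dependencies, passing their outputs along."""
    outputs: Dict[str, Any] = {}
    for stage in TopologicalSorter(chain).static_order():
        inputs = {dep: outputs[dep] for dep in chain[stage]}
        outputs[stage] = run_stage(stage, inputs)
    return outputs

# Example: each stage just records which upstream inputs it received.
result = run_chain(PLANNING_CHAIN, lambda stage, inputs: sorted(inputs))
```

Running the same chain once per country would be a natural next step, and it is the kind of fan-out that an orchestrator like Metaflow is designed to manage.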

Prepare to revolutionize your data infrastructure for the AI era with Amazon EMR, AWS Glue, and Amazon Athena. This session will guide you through leveraging these powerful AWS services to construct robust, scalable data architectures that empower AI solutions at scale. Gain insights into effective architectural strategies for data processing to build AI applications, optimizing for cost-efficiency and security. Explore architectural frameworks that underpin successful AI-driven data initiatives, and learn from field lessons on how to navigate modernization projects. Whether you’re starting your modernization journey or refining current setups, this session offers practical strategies to fast-track your organization towards achieving excellence in AI-powered data management.

Learn more: More AWS events: https://go.aws/3kss9CP

Subscribe: More AWS videos: http://bit.ly/2O3zS75 More AWS events videos: http://bit.ly/316g9t4

ABOUT AWS: Amazon Web Services (AWS) hosts events, both online and in-person, bringing the cloud computing community together to connect, collaborate, and learn from AWS experts. AWS is the world's most comprehensive and broadly adopted cloud platform, offering over 200 fully featured services from data centers globally. Millions of customers—including the fastest-growing startups, largest enterprises, and leading government agencies—are using AWS to lower costs, become more agile, and innovate faster.

#AWSreInvent #AWSreInvent2025 #AWS

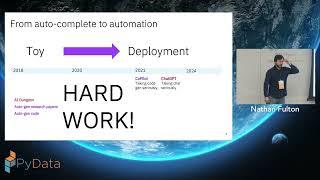

Agentic frameworks make it easy to build and deploy compelling demos. But building robust systems that use LLMs is difficult because of inherent environmental non-determinism. Each user is different, each request is different; the very flexibility that makes LLMs feel magical in-the-small also makes agents difficult to wrangle in-the-large.

Developers who have built large agentic-like systems know the pain. Exceptional cases multiply, prompt libraries grow, instructions are co-mingled with user input. After a few iterations, an elegant agent evolves into a big ball of mud.

This hands-on tutorial introduces participants to Mellea, an open-source Python library for writing structured generative programs. Mellea puts the developer back in control by providing the building blocks needed to circumscribe, control, and mediate essential non-determinism.
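
The abstract does not show Mellea's API. One generic way to constrain LLM non-determinism is to state requirements as checkable functions and retry with feedback until they pass. This sketch shows that pattern with a stubbed model callable; all names are hypothetical, and this is not Mellea code.

```python
from typing import Callable, Dict, List

def generate_with_requirements(
    prompt: str,
    llm: Callable[[str], str],
    requirements: Dict[str, Callable[[str], bool]],
    max_attempts: int = 3,
) -> str:
    """Call `llm` until every requirement check passes, feeding failures back."""
    feedback = ""
    failed: List[str] = []
    for _ in range(max_attempts):
        output = llm(prompt + feedback)
        failed = [name for name, check in requirements.items() if not check(output)]
        if not failed:
            return output
        # Tell the next attempt which requirements the previous output missed.
        feedback = "\n\nYour previous answer did not satisfy: " + "; ".join(failed)
    raise RuntimeError(f"requirements still unmet after {max_attempts} attempts: {failed}")
```

Keeping requirements as named, testable functions separates instructions from user input and makes each failure mode explicit instead of burying it in a growing prompt.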

Unlocking the full potential of AI starts with your data, but real-world documents come in countless formats and levels of complexity. This session will give you hands-on experience with Docling, an open-source Python library designed to convert complex documents into AI-ready formats. Learn how Docling simplifies document processing, enabling you to efficiently harness all your data for downstream AI and analytics applications.

This tutorial tackles a fundamental challenge in modern AI development: creating a standardized, reusable way for AI agents to interact with the outside world. We will explore the Model Context Protocol (MCP), which is designed to connect AI agents with external systems that provide tools, data, and workflows. The session builds a first-principles understanding of the protocol: by building an MCP server from scratch, attendees will learn the core mechanics of the protocol's data layer, namely lifecycle management, capability negotiation, and the implementation of server-side "primitives." The goal is to empower attendees to build their own MCP-compliant services so that their data and tools can be used by a growing ecosystem of AI applications.
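
To make lifecycle and capability negotiation concrete, here is a minimal, transport-free sketch of an MCP-style server that handles JSON-RPC 2.0 messages for `initialize`, `tools/list`, and `tools/call`. It is not the official SDK. The protocol version string, server name, and `add` tool are assumptions, and a real server would also validate arguments against `inputSchema`, negotiate the version the client requests, and track whether initialization has completed.

```python
import json
from typing import Any, Dict, Optional

PROTOCOL_VERSION = "2024-11-05"  # assumption: replace with the spec revision you target

# Hypothetical example tool; inputSchema is a JSON Schema describing its arguments.
TOOLS: Dict[str, Dict[str, Any]] = {
    "add": {
        "description": "Add two numbers.",
        "inputSchema": {
            "type": "object",
            "properties": {"a": {"type": "number"}, "b": {"type": "number"}},
            "required": ["a", "b"],
        },
        "fn": lambda args: args["a"] + args["b"],
    },
}

def _reply(req_id: Any, result: Any = None, error: Optional[Dict[str, Any]] = None) -> str:
    msg: Dict[str, Any] = {"jsonrpc": "2.0", "id": req_id}
    if error is not None:
        msg["error"] = error
    else:
        msg["result"] = result
    return json.dumps(msg)

def handle(raw: str) -> Optional[str]:
    """Handle one JSON-RPC message; return the response text, or None for notifications."""
    req = json.loads(raw)
    if "id" not in req:
        # Notifications such as "notifications/initialized" expect no response.
        return None
    method, req_id, params = req["method"], req["id"], req.get("params", {})
    if method == "initialize":
        # Capability negotiation: advertise that this server offers tools.
        return _reply(req_id, {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "demo-server", "version": "0.1.0"},
        })
    if method == "tools/list":
        listed = [{"name": n, "description": t["description"], "inputSchema": t["inputSchema"]}
                  for n, t in TOOLS.items()]
        return _reply(req_id, {"tools": listed})
    if method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _reply(req_id, error={"code": -32602, "message": "Unknown tool"})
        value = tool["fn"](params.get("arguments", {}))
        return _reply(req_id, {"content": [{"type": "text", "text": str(value)}], "isError": False})
    return _reply(req_id, error={"code": -32601, "message": f"Method not found: {method}"})
```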

PubMed is a free search interface for biomedical literature, including citations and abstracts from many life science journals. It is maintained by the National Library of Medicine at the NIH. Yet most users only interact with it through simple keyword searches. In this hands-on tutorial, we will introduce PubMed as a data source for intelligent biomedical research assistants and build a Health Research AI Agent using modern agentic AI frameworks such as LangChain, LangGraph, and the Model Context Protocol (MCP), with minimal hardware requirements and no API keys. To ensure compatibility, the agent will run in a Docker container that hosts all necessary components.
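
PubMed data can be reached programmatically through NCBI's E-utilities, which accept low-rate requests without an API key. This sketch only builds ESearch and EFetch URLs and makes no network call. Parameter choices such as `retmax` are assumptions, and the abstract does not say how the tutorial's agent actually queries PubMed.

```python
from typing import List
from urllib.parse import urlencode

EUTILS = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils"

def esearch_url(term: str, retmax: int = 20) -> str:
    """Build an ESearch URL that returns PubMed IDs (PMIDs) matching `term` as JSON."""
    params = {"db": "pubmed", "term": term, "retmode": "json", "retmax": retmax}
    return f"{EUTILS}/esearch.fcgi?{urlencode(params)}"

def efetch_url(pmids: List[str]) -> str:
    """Build an EFetch URL that returns plain-text abstracts for the given PMIDs."""
    params = {"db": "pubmed", "id": ",".join(pmids), "rettype": "abstract", "retmode": "text"}
    return f"{EUTILS}/efetch.fcgi?{urlencode(params)}"
```

To run a query, pass the ESearch URL to `urllib.request.urlopen`, read the PMIDs from the JSON response's `esearchresult.idlist`, and then fetch their abstracts with the EFetch URL.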

Participants will learn how to connect language models to structured biomedical knowledge, design context-aware queries, and containerize the entire system using Docker for maximum portability. By the end, attendees will have a working prototype that can read and reason over PubMed abstracts, summarize findings ranked by a semantic similarity engine, and assist with literature exploration, all running locally on modest hardware.
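
The abstract mentions a semantic similarity engine without naming one. As a dependency-free stand-in, this sketch ranks abstracts against a query by the cosine similarity of word-count vectors. A real semantic engine would typically use learned embeddings instead, and the sample IDs and texts in the test are made up.

```python
import math
import re
from collections import Counter
from typing import Dict, List

def vectorize(text: str) -> Counter:
    """Bag-of-words term counts (a stand-in for a learned embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def rank_abstracts(query: str, abstracts: Dict[str, str], k: int = 3) -> List[str]:
    """Return the IDs of the k abstracts most similar to the query."""
    q = vectorize(query)
    scored = sorted(abstracts, key=lambda pmid: cosine(q, vectorize(abstracts[pmid])), reverse=True)
    return scored[:k]
```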

Expected Audience: Enthusiasts, researchers, and data scientists interested in AI agents, biomedical text mining, or practical LLM integration.

Prior Knowledge: Familiarity with Python and Docker; no biomedical background required.

Minimum Hardware Requirements: 8 GB RAM (16 GB or more recommended), 30 GB disk space, and Docker pre-installed, on macOS, Windows, or Linux.

Key Takeaway: How to build a lightweight, reproducible research agent that combines open biomedical data with modern agentic AI frameworks.

Transform Your Business with Intelligent AI to Drive Outcomes

Building reactive AI applications and chatbots is no longer enough. The competitive advantage belongs to those who can build AI that can respond, reason, plan, and execute. Building Agentic AI: Workflows, Fine-Tuning, Optimization, and Deployment takes you beyond basic chatbots to create fully functional, autonomous agents that automate real workflows, enhance human decision-making, and drive measurable business outcomes across high-impact domains like customer support, finance, and research. Whether you're a developer deploying your first model, a data scientist exploring multi-agent systems and distilled LLMs, or a product manager integrating AI workflows and embedding models, this practical handbook provides tried-and-tested blueprints for building production-ready systems. Harness reasoning models for applications such as computer use, multimodal systems for working with many kinds of data, and fine-tuning techniques to get the most out of your models. Learn to test, monitor, and optimize agentic systems to keep them reliable and cost-effective at enterprise scale.

Master the complete agentic AI pipeline:

- Design adaptive AI agents with memory, tool use, and collaborative reasoning capabilities

- Build robust RAG workflows using embeddings, vector databases, and LangGraph state management

- Implement comprehensive evaluation frameworks beyond accuracy, including precision, recall, and latency metrics

- Deploy multimodal AI systems that seamlessly integrate text, vision, audio, and code generation

- Optimize models for production through fine-tuning, quantization, and speculative decoding techniques

- Navigate the bleeding edge of reasoning LLMs and computer-use capabilities

- Balance cost, speed, accuracy, and privacy in real-world deployment scenarios

- Create hybrid architectures that combine multiple agents for complex enterprise applications

Register your book for convenient access to downloads, updates, and/or corrections as they become available. See inside book for details.
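
The blurb's point about evaluating beyond accuracy (precision, recall, and latency) can be made concrete in a few lines. This sketch computes precision and recall for binary labels, plus a nearest-rank 95th-percentile latency. The function names and sample values are illustrative.

```python
from typing import Hashable, List, Sequence, Tuple

def precision_recall(y_true: Sequence[Hashable], y_pred: Sequence[Hashable],
                     positive: Hashable = 1) -> Tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN); 0.0 when undefined."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def p95_latency(latencies_ms: List[float]) -> float:
    """Nearest-rank 95th percentile: smallest sample with at least 95% of samples at or below it."""
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100   # ceil(0.95 * n) using integer arithmetic
    return ordered[rank - 1]
```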

AI agents offer powerful capabilities and require thoughtful design to help manage risks. This session explores responsible agentic AI implementation with appropriate controls and governance. Understand some of the scientific frontiers that inform design considerations, including the language of AI agents, context management, agent interactions, and common sense reasoning. Learn approaches for human oversight, risk mitigation, evaluation methods, and control mechanisms to help align agent behaviors with organizational goals, and help make agentic AI both effective and trustworthy.
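
Human oversight and control mechanisms often start as a thin policy layer between an agent and its tools. This hypothetical sketch blocks tools that are not allowlisted and routes sensitive ones through a human-approval callback. The policy structure and function names are invented for illustration, and the session may describe different mechanisms.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Set

@dataclass
class ToolPolicy:
    allowed: Set[str] = field(default_factory=set)         # tools the agent may call at all
    needs_approval: Set[str] = field(default_factory=set)  # tools that require human sign-off

def guarded_call(name: str, args: Dict[str, Any], policy: ToolPolicy,
                 tools: Dict[str, Callable[..., Any]],
                 approve: Callable[[str, Dict[str, Any]], bool]) -> Dict[str, Any]:
    """Run a tool call only if the policy allows it and, where required, a human approves."""
    if name not in policy.allowed:
        return {"status": "blocked", "reason": f"{name} is not on the allowlist"}
    if name in policy.needs_approval and not approve(name, args):
        return {"status": "rejected", "reason": "human reviewer declined"}
    return {"status": "ok", "result": tools[name](**args)}
```

Returning a structured status instead of raising keeps the decision visible to the agent and easy to log for later evaluation.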

In this session, we will introduce AWS Analytics Model Context Protocol (MCP) Servers, including the Data Processing MCP Server and Amazon Redshift MCP Server, which enable agentic workflows across AWS Glue, Amazon EMR, Amazon Athena, and Amazon Redshift. You will learn how these open-source tools simplify complex analytics operations through natural language interactions with AI agents. We'll cover MCP server implementation strategies, real-world use cases, architectural patterns for deployment, and production best practices for building intelligent data engineering workflows that understand and orchestrate your analytics environment.
